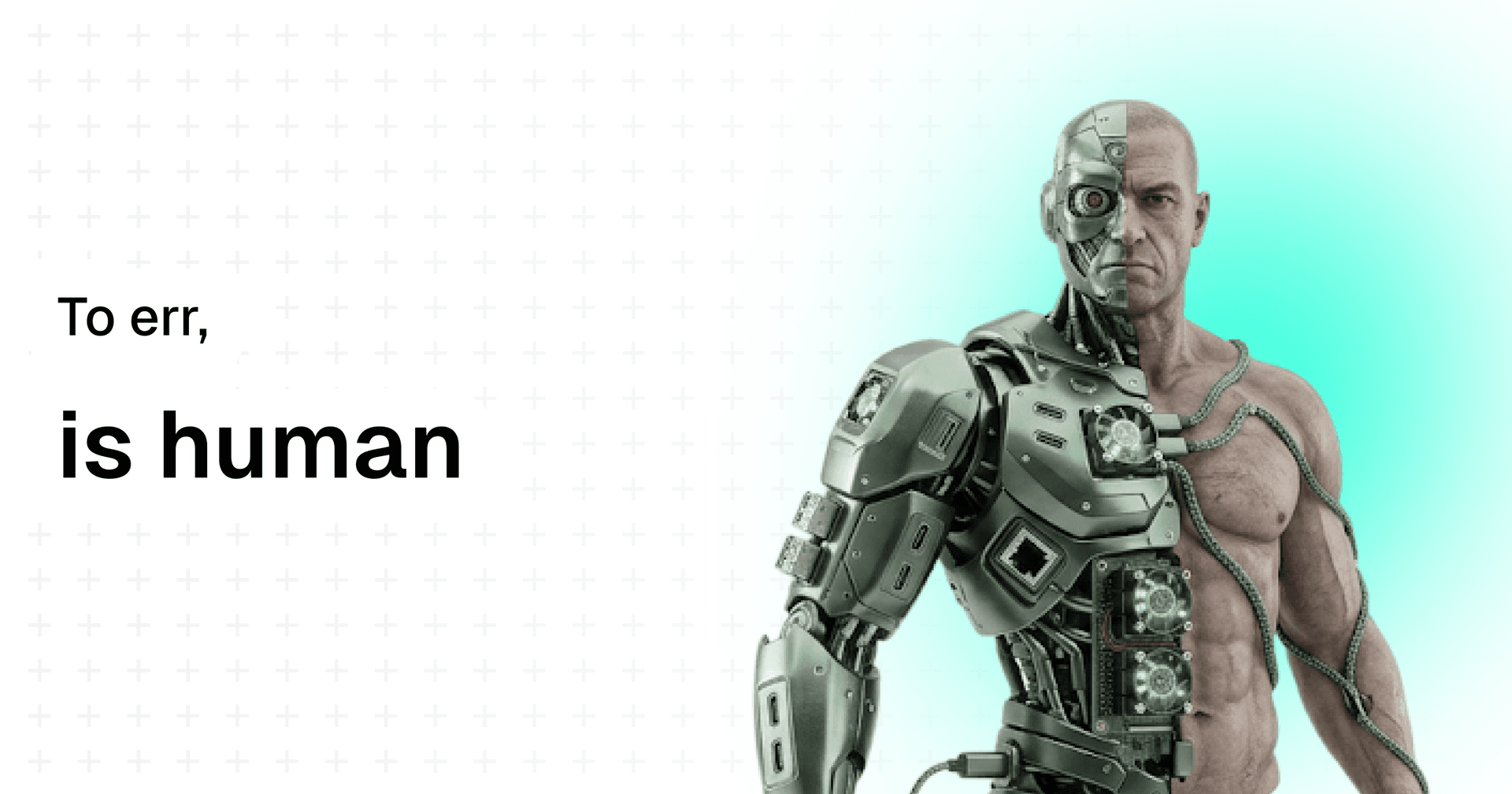

To err is human

In a world of perfect AI, our imperfections are what make us irreplaceable.

TL;DR

- AI optimizes for correctness; humans create meaning through imperfection

- Failure builds trust, vulnerability, and relatability — things AI cannot replicate

- The willingness to err is where courage, creativity, and progress live

- In the age of artificial intelligence, being human is our actual competitive advantage

We're entering a wild time in human history. On the surface, it feels like the machines are finally winning. AI doesn't forget. It doesn't stumble. It doesn't confuse its words, or second-guess itself in the middle of a conversation, or send an awkward text that keeps it up at night. It does what it's asked: fast, clean, perfect(?).

And yet… perfection has never really been the thing that made us human.

If anything, our lives are shaped by imperfection. By the stumble that makes us rethink our footing. By the failure that forces us to reimagine the path. By the mistake that opens a door we didn't even know existed.

Penicillin was discovered because of a lab accident. Post-it notes were born from a failed attempt at making a super-strong adhesive. Jazz improvisation thrives on notes that shouldn't belong together, but somehow do. This is what Steve Jobs meant in his 2005 Stanford commencement address when he said you can only connect the dots looking backward.

AI will always aim for the correct answer and try to be a helpful assistant. Humans, in contrast, thrive in the chaos and the unknown.

Key insight: The history of human innovation is not a story of precision. It's a story of happy accidents, stubborn persistence, and the courage to be wrong.

The fabric of failure

Think about the people you trust the most. Do you trust them because they're perfect? Or are they people who've shown you their cracks, who've admitted when they didn't know, or who've failed and then found a way to rise?

There's something deeply connective about failure and mistakes. They make us relatable. They tell us, "this person is like me." When a leader openly admits a mistake, it gives everyone else permission to stop pretending. That's when trust and candor build. That's when teams loosen their shoulders and create.

AI can simulate human emotions like empathy, but it can't carry the weight of vulnerability. It can't blush or deal with a rush of emotions. It can't feel regret or guilt. It can't tell you, "I messed up, and here's how I'm going to make sure it doesn't happen again." That remains uniquely human.

I've written about this dynamic between human thinking and machine output in From Prompt to Production — the gap between what AI generates and what humans engineer is fundamentally about handling failure states, edge cases, and the messy reality between A and Z.

The courage of pursuing the unknown, and the risk of not

AI is bound by its data and its training. It avoids risk because risk is, by definition, stepping into territory with no precedent. Humans, on the other hand, have always pushed into the unknown. Sometimes with great triumph, sometimes with an even greater failure.

But that willingness to err is where courage lives. Every moon landing, every groundbreaking novel, every social movement worth remembering started with people willing to risk getting it wrong. Without the possibility (and acceptance) of error, there's no real possibility of greatness and progress.

Consider this: When was the last time an AI took a risk that had no precedent? It can't, because risk requires skin in the game, something only humans can put on the table.

Imperfections make meaning

Perfect answers are useful, but they're not memorable. ChatGPT tends to tell you that you're absolutely right, whether you raise a genuine doubt or ask about something you don't fully understand yet. What sticks with us are the jagged edges: the story of someone fumbling, recovering, and growing. Our mistakes become our myths. They're the way we teach lessons, pass on wisdom, and make sense of the chaotic world around us.

AI may win at precision, but it can't create meaning from imperfection. That's the human domain.

This is also why I believe the no-code and vibe-coding movement is so powerful. It's not about eliminating human thinking from the process. It's about giving more humans the tools to make imperfect, iterative, courageous attempts at building something real.

To err is human—still

So maybe the point isn't to compete with AI on its terms, in the game it chooses. Maybe the point is to double down on ours. To embrace the places where we are fallible, because those are the places where we stand out.

To err is human. And in a world of perfect AI, it's also what makes us irreplaceable.

If you want to explore how this philosophy applies to building real products, check out my deep dive on the real difference between design and engineering and why the messy middle is where value actually lives.